By Patrick Henningsen

Source: Waking Times

Much has been written about the approaching Police State in alternative media. Commentary ranges from various warnings, to shock and outrage, and fear over an impending martial law takeover in North America and Western Europe. It’s hitting us from so many different angles, and yet the mainstream conversation continues to be woefully inadequate in both characterising the situation and offering a remedy.

In order to really understand the modern Police State, we need to explore some very profound and difficult questions. Many people who consider themselves aware think Western society has already reached the tipping point and the deteriorating situation is simply inevitable. If you feel like Winston Smith right about now you aren’t alone.

Prior to the mid-1990s, one might have described the militarisation of public law enforcement as something of a creeping paradigm, but one that was still a long way off. Society explored many aspects of the Police State, both the physical and Orwellian psychological scenario, through literature and film. American science fiction writer Philip K. Dick penned some significant works like The Minority Report, and cinematic hits like Paul Verhoeven’s Robocop and Terry Gilliam’s Brazil also explored what this dystopic, future vision of fascist technocracy might look like. As it turned out, and far from fantasy, countless devices, systems and themes depicted in so many of these supposedly ‘fictional’ classics have since made their way into our day to day lives. The dark dream became real.

Unfortunately, as humanity’s freshmen class of the early 21st century, we can no longer afford the intellectual distance enjoyed by previous generations between life today and that blurry, far-off spectre of something that might arrive sometime at some point in the future.

Any modern globalised Police State requires a social engineering framework in order to provide its shape and scope of law enforcement. The latest social engineering blueprint for global technocratic management was unveiled at this year’s 70th United Nations General Assembly in New York City. Their ‘new’ agenda (newer than the old one) entitled, Agenda 2030,1 hopes to “transform our world for the better by 2030.” Author Michael Snyder from the blog ‘End of The American Dream’ explains: “The entire planet is going to be committing to work toward 17 sustainable development goals and 169 specific sustainable development targets, and yet there has been almost a total media blackout about this…”2

Within its 17 ‘universal goals’, the actual Police State provision for Agenda 2030 can be found within Goal 11, which states how the new global government will, “Make cities and human settlements inclusive, safe, resilient and sustainable.” Translated in technocracy terms, this means more Big Brother tech, smart grid tracking and big data surveillance states.

The age of computerisation and database integration, along with advances in military and crowd control technology perfected overseas, has enabled a sharp advance toward the Police State. Trying to make sense of ‘it’ is a major challenge, to say the least. In its totality, the control system is both multifaceted and multilayered. It may have been possible to describe it, or even define it 20, 30, or 40 years ago, as Philip K. Dick and so many others did. Today, as society has already eclipsed the possible, we face a situation whereby the very thing we are trying to describe is woven through nearly every fabric of modern social, professional, family, religious and political life.

If you happen to live in one of the technocratic nations, you can’t opt out, nor can you fully repeal the advances already made by the control system. What other options are available?

Firstly, we have to try and understand, from an economic, cultural and political perspective at least, how this control system came to be.

What are its strongest areas? Can we reform those areas? Where is it still emerging? Can those areas be slowed down? What was the political climate that enabled it?

How to Build a Police State

When you observe a modern Police State, the first things you might notice will not necessarily be the batons, shields, helmets or MRAPs. Think Switzerland or Singapore. A modern Police State will be neat, clean and efficient. Retail zones will be shiny and feature all the top designer brands. Many of the people you see in public will be well-groomed, well-heeled and beautiful, but often with only one political party and a strict public code.

Just like admirers of the modern Chinese State, Singapore’s proponents refer to the single party State as “a great argument for Authoritarianism.” Order and civility rule the day, so long as you don’t fall foul of the narrow perimeters set by the State.

What has been accomplished in Southeast Asia since 1965, and what is possible in previously ‘free’ countries like the US, UK and Australia, are two very different social and political evolutions. Still, the modern Police State is advancing globally and it’s being driven primarily by three factors: technology, for-profit industry, and an age-old obsession by the ruling class to manage the masses.

The first and easiest area to challenge is the physical realm of the control system. The most obvious of these are the gadgets and toys. They are easy to see. Look at your local police department and notice the difference between what officers looked like and what they wore in the 1970s, 1980s, 1990s and now in the 21st century. Notice the firearms and tasers, the ‘Bat-Belts’, and now the body cameras. Your average officer today looks like a cross between a soldier and an android. Dress them like robots and don’t be surprised when they act like machines (and it won’t be long until many of them are replaced by machines).

If you’ve ever attended a street protest or witnessed some civil unrest, then you’ll have noticed the high-tech body armour, the riot and ‘crowd suppression’ equipment.

My first intense experience where I felt the full force of the modern Police State was in 2009, at the G20 Protests in the City of London, England. It was early in the evening and approximately 4,000 demonstrators suddenly found themselves trapped at Bishopsgate. Several hundred police officers on foot and horseback had blocked all the entrances and egresses in and out of the main road. Even alleyways were manned by riot police. Then police began charging the crowds, and beating protesters with clubs. They alternated their ‘surge’ efforts, from different ends of the street, north to south, one brutal flurry after another. The worst part about it was there was no escape route away from the police. Many were beaten and trampled on that evening. It was as if police planners were playing a video game.

Finally, at around 9pm, after being forced to stand, surrounded by police in a ‘Kettle’ for nearly three hours, along with 500 other demonstrators and press, who spent most of that time pressed up against police shields and not knowing what would happen next – I realised this is an impersonal, disinterested and totally uncompromising machine. It does not care who you are, what your views and opinions are, or whether you are innocent or guilty. The lesson was simple: “next time, stay home.” The only detail this machine is concerned with is that you comply with orders, and if no orders are given, then the machine demands you stay where you are until the machine decides what to do with you. If you complain too much, or become emotional, or heaven forbid act out in any way, then the machine will move in to subdue and detain you. That is all there is to it.

Big Brother Reality

It’s well-known that Great Britain is home to the world’s largest and most sophisticated physical Police State, including millions of closed circuit television (CCTV) cameras, covering every conceivable inch of habitable space, both indoors and outdoors. The CCTV phenomenon in Britain was fuelled by an obsession with cameras that became increasingly popular with both government and corporate technocrats in the 1980s and 1990s. The psychology behind the exponential proliferation in cameras was mainly a fairly crude bit of criminology which held that the cameras would somehow act as a deterrent to criminal behaviour, and thus subdue the feral population into a more docile state. Industry used this line too, as salespeople were deployed en masse with endless flip charts and statistical models that claimed CCTV cameras would prevent the UK’s spiralling social malaise.

The only problem is that more cameras don’t equal less crime. Canadian writer Cory Doctorow observed this reality back in 2011, explaining: “After all, that’s how we were sold on CCTV – not mere forensics after the fact, but deterrence. And although study after study has concluded that CCTVs don’t deter most crime (a famous San Francisco study showed that, at best, street crime shifted a few metres down the pavement when the CCTV went up), we’ve been told for years that we must all submit to being photographed all the time because it would keep the people around us from beating us, robbing us, burning our buildings and burglarising our homes.”3

The CCTV is only one single aspect of Big Brother. It turns out that the real value of the CCTV camera grid is not so much the monitoring of crime per se, as it is in mass applied behavioural psychology.

The Panopticon

The physical Police State could not exist without some philosophical underpinning. Before Orwell, there was Bentham…

In the late 18th century, Britain saw the development of a new style of prison architecture known as the ‘Panopticon’ under the aegis of utilitarian philosopher Jeremy Bentham.4 The unique feature of this Panopticon concept was the transparent nature of each prisoner cell, visible to a central surveillance guard tower that could eye inmates at all times. The result of this psychological experiment, according to the pragmatic Benthamite philosophy, was to produce a regime of “self-policing” amongst the inmates, a kind of early behavioural conditioning. For technocrats and emerging utilitarian social managers of that era, this was seen as the most economic and efficient solution. Ultimately, this Benthamite concept is what underpinned phase one of the mass CCTV deployment throughout the UK. Sitting well above the security minions and the industry profiteers, elite scholars knew full well that CCTV cameras do not stop crime.

The real power of the Panopticon is in convincing the general population they are under constant surveillance. After that point, through a long-term process of nudging, diversions and scare tactics, the State gradually moulds the behaviour and thoughts of its subjects.

In order to keep citizens locked into this new conscious state of fear and trepidation, the State needs an enemy…

The Long War & ‘The Extremist’

One of the chief campaigns to nudge society towards a fully-functional Orwellian State is the War on Terror. Ever since September 11, 2001, the concept of an endless war against the ‘terrorists’ – a seemingly ubiquitous and constantly shape-shifting enemy – has been used to justify nearly every large new security expenditure and policy. Back in 2006, US President George W. Bush’s chief architect of the ‘long war’, Secretary of Defense Donald Rumsfeld, laid out the tea leaves for the next 100 years, stating: “It does not have to do with deployment of US military forces, necessarily. It has to do with the struggle that’s taking place within that faith between violent extremists – a small number of them, relatively – who are capable of going out and killing a great many people, as they’re doing, and the overwhelming majority of that religion that does not believe in violent extremism or terrorism.”5

In George Orwell’s classic novel 1984, Winston Smith also grappled with the State’s endless war. “Oceania was at war with Eurasia: therefore Oceania had always been at war with Eurasia.”

In Oceania, people eventually forgot what started the long war. The news was just one terrorist attack after another. The enemy was everywhere, but nowhere too. The population learned to acquiesce to the idea that war was the permanent state of affairs, and that questioning the provenance of this idea was futile.

“Winston could not definitely remember a time when his country had not been at war, but it was evident that there had been a fairly long interval of peace during his childhood, because one of his early memories was of an air raid, which appeared to take everyone by surprise. Perhaps it was the time when the atomic bomb had fallen on Colchester. He did not remember the raid itself.”

And so it was, in the early moments of the 21st century, Orwell’s dream suddenly became a waking reality. Social engineers are firm believers that if the Panopticon (married with the threat of an invisible enemy) can remain in place for a generation, then the State could fundamentally change a once free-thinking society into something noticeably different – a much more fearful and compliant populace.

The Social Media Panopticon

As terror scares and attacks become something of a daily event in the West, identifying and quarantining the ‘extremist’ becomes a primary fetish of the Police State and its media arms. This is very much evident in how terrorists and ‘active shooters’ (dead or alive) are now profiled after the event. The mainstream media has integrated this into its work practice by crafting the post hoc guilty verdict of the accused, prior to a trial, with circumstantial or non sequitur accusations based on an individual’s “web history” that may have “radicalised” the suspect. In effect, the mainstream media’s function as an establishment propaganda arm results in trial by media – the bypassing of any trial by jury as the accused have already been implicitly or explicitly declared guilty by association or something as nebulous as “web history.”

Such incidents, as they are portrayed in the media for psychological conditioning purposes, are intended to cause the public mind to dismiss outdated notions of fair and due process and rule of law in favour of fiat corporate news and government “official” pronouncements. The net effect of this trend is that social media users, i.e. the majority of the population, are adopting self-policing habits in their communications online. According to the principles of applied behavioural psychology, if you change the language people use, then eventually you change the way they think and act.

Like Bentham’s Panopticon, this new social media monitoring system works by utilising the digital web, which is arguably the most economic and efficient solution. It depends on the acceptance of self-policing and of vague terms such as “radicalised”, which are subject to the increasingly elastic definitions of the social engineering establishment.

This leads to one of the most profound questions one might ask in the wake of Edward Snowden’s NSA spying revelations: Knowing what we know now, are people more outspoken or are they more self-policing because of the Snowden leaks?

‘The Daily Shooter’

By extension, once the technocrat has regained some modicum of physical control, then the next domain to be conquered is the mind. In 1984, the technocracy was viewed through the eyes of the protagonist Winston Smith, who while remaining a physical prisoner of the Police State, could still retreat into his own mental state.

In our day, the expansion of the surveillance State and vast spying by the likes of the NSA and GCHQ are precisely intended to achieve this same effect, with the justification for such intrusions being an endless series of terror spectacles and lone wolf public shooting events. In the US, these mass shootings and terror scares are happening on an almost daily basis, hence, ‘The Daily Shooter’. Media coverage is both chaotic and relentless. As a result, the public are left stupefied and completely unable to challenge whatever narrative the government-media complex is selling at that time. The Police State marches forward.

A similar psychodrama also played out for 1984’s protagonist Winston Smith. As time progressed, however, maintaining some level of autonomy in one’s own thoughts became increasingly difficult for Winston. The final objective of the Police State, it seemed, was not only to fundamentally transform the way citizens act, but how they think too. The all-seeing and all-controlling “Big Brother” State was also the de facto social authority figure. The State’s law enforcement police force also became the “thought police.”

We see this same exact narrative playing out today as the State’s political figureheads continue in their mission to widen their definition of “extremism” along with other State-issued euphemisms used to describe citizens who should be regarded with suspicion.

Fall out of line and you might even be segregated or sent away to a special camp. Following the recent mass shooting in Chattanooga, Tennessee, retired US General and NATO Commander Wesley Clark proposed that any “disloyal Americans” should be sent to internment camps for the “duration of the conflict.” Notice the language: “for the duration of the conflict.” Indeed, it seems that Oceania is at war. He went even further, calling for the US government to identify people most likely to be “radicalised” so we can “cut this off at the beginning.”

“At the beginning?” Here, it seems Clark might be alluding to pre-crime, which will be powered by A.I…

Artificial Intelligence

Post-September 11, UK society was still hooked on its CCTV matrix, and with millions of cameras already in place and crime continuing to rise, security ‘experts’ and politicians simply doubled down on their previous wager, insisting that what the country really needed was more cameras. They believed that once a certain CCTV saturation was reached, by default they would somehow reach their twisted utopia.

It turned out that it’s not humanly possible for security workers, most of whom are on a mere £7-10 (AUD$14-20) per hour, to keep track of, let alone analyse, a seemingly endless stream of footage. For the technocrat, the operative word here is ‘humanly’. Enter A.I…

Once again, advanced technology enters the narrative and supplies the solution to this previously insurmountable problem. The age of Artificial Intelligence, or A.I., is nearly upon us, and this next step in technological development is certain to radically change the entire concept of the Police State.

Laying down the framework for an A.I. grid is not easy because the grid must be designed to cope with the application of A.I. As A.I.’s potential and practical applications have not yet been fully realised, designing the grid upon which it will be unleashed has been problematic up to this point. Sadly, society on the whole appears uninterested in questioning the social and unethical imperative currently driving the adoption of these new technologies.

At present, the big money is on the Smart Grid. Technocrats and their corporate partners are hoping to usher in their new surveillance grid under the auspices of ‘smart’ technologies. With A.I. in play, technocrats will be able to utilise the smart grid – which includes your mobile phone – to detect and track multiple targets over a wide area.6 Add facial recognition and data profiling to the mix and it’s a recipe for a full-on A.I. Smart Grid future. The ultimate hands-free, ‘surveillance selfie’ – compliments of Big Brother.

Just imagine, one day you’re simply walking down the street and pointing to something in the air. All of it is being captured on a 1.8 billion pixel video stream from the sky. They already know your identity and location with the phone in your pocket, and they already have your face logged and tracked.7

At this point we introduce Philip K. Dick’s concept of “pre-crime” whereby an A.I. system can predict an action you are likely to take.8 The system will then close the ‘Big Data’ loop by storing the video footage alongside your profile into a massive data ‘mash-up’. It will then compare it with other potentially ‘suspicious’ activity in the area. London’s Metropolitan Police, Britain’s largest police force, is already using a type of pre-crime software that British technocrats believe will somehow ‘revolutionise’ modern policing in the 21st century.9

UK consumer advocate Pippa King explains how CCTV is already being phased out: “CCTV, closed circuit television, is not quite what is operating on our streets today. What we have now is IPTV, an internet protocol television network that can relay images to analytical software that uses algorithms to determine pre-crime area in real time.”

“Currently this AI looks at areas that may be targeted for crimes such as burglaries or joyriding,10 with the predicted hotspot information being sent direct to law enforcement smart phones in the field. This analytical software is being used in Glasgow, hailed as Britain’s first ‘smart city’,11 where the Israeli security firm NICE Systems are running the CCTV/IPTV network, analysing data from the 442 fixed HD surveillance cameras and 30 mobile units under a project called ‘Community Safety Glasgow’,12 whose primary objectives are described as ‘delivering Glasgow a more efficient traffic management system, identifying crime in the city and tracking individuals’.”13

This can all happen thanks to the US Defense Advanced Research Projects Agency’s (DARPA) latest creation – the ARGUS camera, Autonomous Real-Time Ground Ubiquitous Surveillance.14 According to its designers, ARGUS “melds together video from each of its 368 chips to create a 1.8 billion pixel video stream”, all in real-time and archived. It’s just one of the many new toys used by the State to realise its Orwellian ambitions.

Who’s Paying For It All?

Aside from its ability to trample over the rights of law abiding citizens, the Police State has one other chief characteristic which may also be its Achilles heel: it’s bankrupting the State. Here’s how it works:

The gravy train is endless, but only with the help of taxpayers’ money, along with a series of bribes and favours between politicians and corporates. If you have ‘friends’ in government administration, then you are more likely to cash in on any number of lucrative ‘domestic defense’ contracts.

Where you have constant crisis you also have constant business opportunity. In this dark paradigm, timing is everything. As US President Barack Obama’s sociopathic15 former chief of staff, now Mayor of Chicago, Rahm Emanuel, once said:

“You never let a serious crisis go to waste. And what I mean by that is it’s an opportunity to do things you think you could not do before.”

With that mantra in mind, in the wake of any shooting, terror scare, or crisis, industrial lobbyists and their elected political gophers will waste no time pushing for new federally-funded add-ons like training courses, workplace psychologists, regulators, specialist contractors, police cameras and other big-ticket items16 – anything to help “solve the crisis.” One such program in the US is known simply as the ‘1033’.

Joseph Lemieux writes:

“The 1033 program has flooded our local police forces with military equipment, and has turned them from Peace Officers, to a domestic army.”

“Officers stopped looking like officers, and more like soldiers all kitted out with fully automatic weapons, armoured vehicles, body armour, grenade launchers, night vision, and even bayonets! Besides the cost of liberty, how much has this domestic army cost you, the taxpayer?”17

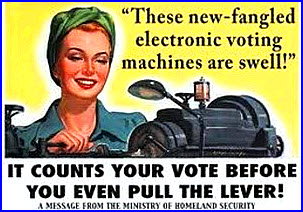

In the US, no single entity embodies the Police State gravy train more than the Department of Homeland Security (DHS), where federal grants are used to bribe local law enforcement and absorb them into a larger framework of institutional dependency.

At over $200 billion per year, the DHS is now America’s most expensive federal agency. As any sane local law enforcement chief will tell you, once you smoke from the federal crack pipe, you’re hooked for life. Remember that each federal Police State agenda item has a lucrative contract attached to it. With each move central government makes, a large amount of money is also made (by someone).

By cutting off public money that is driving the runaway federal Police State in Western countries, the people have a chance to mitigate and potentially reform the current agenda.

If we hope to preserve what is left of our hard-fought democracy, then now is the time to put it to the test. The alternative is unthinkable.

About the Author

Patrick Henningsen is an independent investigative reporter, editor, and journalist. A native of Omaha, Nebraska and a graduate of Cal Poly San Luis Obispo in California, he is currently based in London, England and is the managing editor of 21st Century Wire – News for the Waking Generation (www.21stCenturyWire.com) which covers exposés on intelligence, geopolitics, foreign policy, the war on terror, technology and Wall Street. Patrick is a regular commentator on Russia Today.

Footnotes:

- ‘Transforming our world: the 2030 Agenda for Sustainable Development’, https://sustainabledevelopment.un.org/post2015/transformingourworld

- ‘The 2030 Agenda: This Month The UN Launches A Blueprint For A New World Order With The Help Of The Pope’ by Michael Snyder, 2 Sept 2015, http://endoftheamericandream.com/archives/the-2030-agenda-this-month-the-un-launches-a-blueprint-for-a-new-world-order-with-the-help-of-the-pope

- ‘Why CCTV has failed to deter criminals’ by Cory Doctorow, The Guardian, 17 August 2011

- www.ucl.ac.uk/Bentham-Project/who/panopticon

- www.sourcewatch.org/index.php/The_Long_War

- ‘Bilderberg 2015: Implementation of the A.I. Grid’ by Jay Dyer, 21st Century Wire (www.21stcenturywire.com), 14 June 2015

- ‘Britain Launches “Big Brother” System, Uploads One Third of Population to Facial Recognition Database’, 21st Century Wire, 3 Feb 2015

- ‘Already Underway: Smart A.I. Running Our Police and Cities’ by Pippa King, 21st Century Wire, 13 Mar 2015

- ‘British Police Roll Out New “Precrime” Software to Catch Would-Be Criminals’, 21st Century Wire, 13 Mar 2015

- ‘Pre-crime software recruited to track gang of thieves’ by Chris Baraniuk, New Scientist, 11 Mar 2015

- ‘Glasgow wins “smart city” government cash’, BBC News, www.bbc.com/news/technology-21180007

- www.saferglasgow.com

- ‘Already Underway: Smart A.I. Running Our Police and Cities’, op.cit.

- www.darpa.mil/program/autonomous-real-time-ground-ubiquitous-surveillance-infrared

- ‘The Two Sides of Rahm Emanuel: Sociopathic Political Hitman and Puppy Lover’ by Foster Kamer, 16 Aug 2009, gawker.com

- ‘Mayor de Blasio Announces Retraining of New York Police’ by Marc Santora, The New York Times, 4 Dec 2014

- ‘How Much Money Have American Taxpayers Spent on Building a Domestic Police State?’ by Joseph Lemieux, 1 Dec 2014, http://theantimedia.org/taxpayers-police-state/

The above article appeared in New Dawn 153 (Nov-Dec 2015)