Employers exercise vast control over our lives, even when we’re not on the job. How did our bosses gain power that the government itself doesn’t hold?

By Miya Tokumitsu

Source: New Republic

Work no longer works. “You need to acquire more skills,” we tell young job seekers whose résumés at 22 are already longer than their parents’ were at 32. “Work will give you meaning,” we encourage people to tell themselves, so that they put in 60 hours or more per week on the job, removing them from other sources of meaning, such as daydreaming or social life. “Work will give you satisfaction,” we insist, even though it requires abiding by employers’ rules, and the unwritten rules of the market, for most of our waking hours. At the very least, work is supposed to be a means to earning an income. But if it’s possible to work full time and still live in poverty, what’s the point?

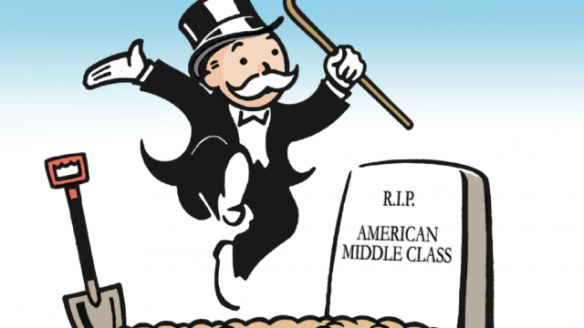

Even before the global financial crisis of 2008, it had become clear that if waged work is supposed to provide a measure of well-being and social structure, it has failed on its own terms. Real household wages in the United States have remained stagnant since the 1970s, even as the costs of university degrees and other credentials rise. Young people find an employment landscape defined by unpaid internships, temporary work, and low pay. The glut of degree-holding young workers has pushed many of them into the semi- or unskilled labor force, making prospects even narrower for non–degree holders. Entry-level wages for high school graduates have in fact fallen. According to a study by the Federal Reserve Bank of New York, these lost earnings will depress this generation’s wages for their entire working lives. Meanwhile, those at the very top—many of whom derive their wealth not from work, but from returns on capital—vacuum up an ever-greater share of prosperity.

Against this bleak landscape, a growing body of scholarship aims to overturn our culture’s deepest assumptions about how work confers wealth, meaning, and care throughout society. In Private Government: How Employers Rule Our Lives (and Why We Don’t Talk About It), Elizabeth Anderson, a professor of philosophy at the University of Michigan, explores how the discipline of work has itself become a form of tyranny, documenting the expansive power that firms now wield over their employees in everything from how they dress to what they tweet. James Livingston, a historian at Rutgers, goes one step further in No More Work: Why Full Employment Is a Bad Idea. Instead of insisting on jobs for all or proposing that we hold employers to higher standards, Livingston argues, we should just scrap work altogether.

Livingston’s vision is the more radical of the two; his book is a wide-ranging polemic that frequently delivers the refrain “Fuck work.” But in original ways, both books make a powerful claim: that our lives today are ruled, above all, by work. We can try to convince ourselves that we are free, but as long as we must submit to the increasing authority of our employers and the labor market, we are not. We therefore fancy that we want to work, that work grounds our character, that markets encompass the possible. We are unable to imagine what a full life could be, much less to live one. Even more radically, both books highlight the dramatic and alarming changes that work has undergone over the past century—insisting that, in often unseen ways, the changing nature of work threatens the fundamental ideals of democracy: equality and freedom.

Anderson’s most provocative argument is that large companies, the institutions that employ most workers, amount to a de facto form of government, exerting massive and intrusive power in our daily lives. Unlike the state, these private governments are able to wield power with little oversight, because the executives and boards of directors that rule them are accountable to no one but themselves. Although they exercise their power to varying degrees and through both direct and “soft” means, employers can dictate how we dress and style our hair, when we eat, when (and if) we may use the toilet, with whom we may partner and under what arrangements. Employers may subject our bodies to drug tests; monitor our speech both on and off the job; require us to answer questionnaires about our exercise habits, off-hours alcohol consumption, and childbearing intentions; and rifle through our belongings. If the state held such sweeping powers, Anderson argues, we would probably not consider ourselves free men and women.

Employees, meanwhile, have few ways to fight back. Yes, they may leave the company, but doing so usually necessitates being unemployed or migrating to another company and working under similar rules. Workers may organize, but unions have been so decimated in recent years that their clout is greatly diminished. What’s more, employers are swift to fire anyone they suspect of speaking to their colleagues about organizing, and most workers lack the time and resources to mount a legal challenge to wrongful termination.

It wasn’t supposed to be this way. As corporations have worked methodically to amass sweeping powers over their employees, they have held aloft the beguiling principle of individual freedom, claiming that only unregulated markets can guarantee personal liberty. Instead, operating under relatively few regulations themselves, these companies have succeeded at imposing all manner of regulation on their employees. That is to say, they use the language of individual liberty to claim that corporations require freedom to treat workers as they like.

Anderson sets out to discredit such arguments by tracing them back to their historical origins. The notion that personal freedom is rooted in free markets, for instance, originated with the Levellers in seventeenth-century England, when working conditions differed substantially from today’s. The Levellers believed that a market society was essential to liberate individuals from the remnants of feudal hierarchies; their vision of utopia was a world in which men could meet and interact on terms of equality and dignity. Their ideas echoed through the writing and politics of later figures like John Locke, Adam Smith, Thomas Paine, and Abraham Lincoln, all of whom believed that open markets could provide the essential infrastructure for individuals to shape their own destiny.

An anti-statist streak runs through several of these thinkers, particularly the Levellers and Paine, who viewed markets as the bulwark against state oppression. Paine and Smith, however, would hardly qualify as hard-line contemporary libertarians. Smith believed that public education was essential to a fair market society, and Paine proposed a system of social insurance that included old-age pensions as well as survivor and disability benefits. Their hope was not for a world of win-or-die competition, but one in which open markets would allow individuals to make the fullest use of their talents, free from state monopolies and meddlesome bosses.

For Anderson, the latter point is essential; the notion of lifelong employment under a boss was anathema to these earlier visions of personal freedom. Writing in the 1770s, Smith assumes that independent actors in his market society will be self-employed, and uses butchers and bakers as his exemplars; his “pin factory,” meant to illustrate division of labor, employs only ten people. These thinkers could not envision a world in which most workers spend most of their lives performing wage labor under a single employer. In an address before the Wisconsin State Agricultural Society in 1859, Lincoln stated, “The prudent, penniless beginner in the world labors for wages awhile, saves a surplus with which to buy tools or land for himself, then labors on his own account another while, and at length hires another new beginner to help him.” In other words, even well into the nineteenth century, defenders of an unregulated market society viewed wage labor as a temporary stage on the way to becoming a proprietor.

Lincoln’s scenario does not reflect the way most people work today. Yet the “small business owner” endures as an American stock character, conjured by politicians to push through deregulatory measures that benefit large corporations. In reality, thanks to a lack of guaranteed, nationalized health care and threadbare welfare benefits, setting up a small business is simply too risky a venture for many Americans, who must rely on their employers for health insurance and income. These conditions render long-term employment more palatable than a precarious existence of freelance gigs, which further gives companies license to oppress their employees.

The modern relationship between employer and employee began with the rise of large-scale companies in the nineteenth century. Although employment contracts date back to the Middle Ages, preindustrial arrangements bore little resemblance to the documents we know today. Like modern employees, journeymen and apprentices often served their employers for years, but masters performed the same or similar work in proximity to their subordinates. As a result, Anderson points out, working conditions—the speed required of workers and the hazards to which they might be exposed—were kept in check by what the masters were willing to tolerate for themselves.

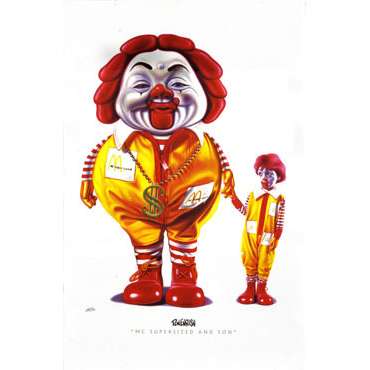

The Industrial Revolution brought radical changes, as companies grew ever larger and management structures more complex. “Employers no longer did the same kind of work as employees, if they worked at all,” Anderson observes. “Mental labor was separated from manual labor, which was radically deskilled.” Companies multiplied rapidly in size. Labor contracts now bonded workers to massive organizations in which discipline, briefs, and decrees flowed downward, but whose leaders were unreachable by ordinary workers. Today, fast food workers or bank tellers would be hard-pressed to petition their CEOs at McDonald’s or Wells Fargo in person.

Despite this, we often speak of employment contracts as agreements between equals, as if we are living in Adam Smith’s eighteenth-century dream world. In a still-influential paper from 1937 titled “The Nature of the Firm,” the economist and Nobel laureate Ronald Coase established himself as an early observer and theorist of corporate concerns. He described the employment contract not as a document that handed the employer unaccountable powers, but as one that circumscribed those powers. In signing a contract, the employee “agrees to obey the directions of an entrepreneur within certain limits,” he emphasized. But such characterizations, as Anderson notes, do not reflect reality; most workers agree to employment without any negotiation or even communication about their employer’s power or its limits. The exceptions to this rule are few and notable: top professional athletes, celebrity entertainers, superstar academics, and the (increasingly small) groups of workers who are able to bargain collectively.

Yet because employment contracts create the illusion that workers and companies have arrived at a mutually satisfying agreement, the increasingly onerous restrictions placed on modern employees are often presented as “best practices” and “industry standards,” framing all sorts of behaviors and outcomes as things that ought to be intrinsically desired by workers themselves. Who, after all, would not want to work on something in the “best” way? Beyond employment contracts, companies also rely on social pressure to foster obedience: If everyone in the office regularly stays until seven o’clock every night, who would risk departing at five, even if it’s technically allowed? Such social prods exist alongside more rigid behavioral codes that dictate everything from how visible an employee’s tattoo can be to when and how long workers can break for lunch.

Many workers, in fact, have little sense of the legal scope of their employer’s power. Most would be shocked to discover that they could be fired for being too attractive, declining to attend a political rally favored by their employer, or finding out that their daughter was raped by a friend of the boss—all real-life examples cited by Anderson. Indeed, it is only after dismissal for such reasons that many workers learn of the sweeping breadth of at-will employment, the contractual norm that allows American employers to fire workers without warning and without cause, except for reasons explicitly deemed illegal.

In reality, the employment landscape is even more dire than Anderson outlines. The rise of staffing or “temp” agencies, for example, undercuts the very idea of a direct relationship between worker and employer. In The Temp Economy: From Kelly Girls to Permatemps in Postwar America, sociologist Erin Hatton notes that millions of workers now labor under subcontracting arrangements, which give employers even greater latitude to abuse employees. For years, Walmart—America’s largest retailer—used a subcontracting firm to hire hundreds of cleaners, many from Eastern Europe, who worked for months on end without overtime pay or a single day off. After federal agents raided dozens of Walmarts and arrested the cleaners as illegal immigrants, company executives used the subcontracting agreement to shirk responsibility for their exploitation of the cleaners, claiming they had no knowledge of their immigration status or conditions.

By any reasonable standard, much “temp” work is not even temporary. Employees sometimes work for years in a single workplace, even through promotions, without ever being granted official status as an employee. Similarly, “gig economy” platforms like Uber designate their workers as contractors rather than employees, a distinction that exempts the company from paying them minimum wage and overtime. Many “permatemps” and contractors perform the same work as employees, yet lack even the paltry protections and benefits awarded to full-time workers.

A weak job market, paired with the increasing precarity of work, means that more and more workers are forced to make their living by stringing together freelance assignments or winning fixed-term contracts, subjecting those workers to even more rules and restrictions. On top of their actual jobs, contractors and temp workers must do the additional work of appearing affable and employable not just on the job, but also during their ongoing efforts to secure their next gig. Constant pitching, application writing, and personal branding on social media require a level of self-censorship, lest a controversial tweet or compromising Facebook photo sink their job prospects. Forced to anticipate the wishes not of a specific employer, but of all potential future employers, many opt out of participating in social media or practicing politics in any visible capacity. Their public personas are shaped not by their own beliefs and desires, but by the demands of the labor market.

For Livingston, it’s not just employers but work itself that is the problem. We toil because we must, but also because our culture has trained us to see work as the greatest enactment of our dignity and personal character. Livingston challenges us to turn away from such outmoded ideas, rooted in Protestant ideals. Like Anderson, he sweeps through centuries of labor theory with impressive efficiency, from Marx and Hegel to Freud and Lincoln, whose 1859 speech he also quotes. Livingston centers on these thinkers because they all found the connection between work and virtue troubling. Hegel believed that work causes individuals to defer their desires, nurturing a “slave morality.” Marx proposed that “real freedom came after work.” And Freud understood the Protestant work ethic as “the symptom of repression, perhaps even regression.”

Nor is it practical, Livingston argues, to exalt work: There are simply not enough jobs to keep most adults employed at a living wage, given the rise of automation and increases in productivity. Besides, the relation between income and work is arbitrary. Cooking dinner for your family is unpaid work, while cooking dinner for strangers usually comes with a paycheck. There’s nothing inherently different in the labor involved—only in the compensation. Anderson argues that work impedes individual freedom; Livingston points out that it rarely pays enough. As technological advances continue to weaken the demand for human labor, wages will inevitably be driven down even further. Instead of idealizing work and making it the linchpin of social organization, Livingston suggests, why not just get rid of it?

Livingston belongs to a cadre of thinkers, including Kathi Weeks, Nick Srnicek, and Alex Williams, who believe that we should strive for a “postwork” society in one form or another. Strands of this idea go back at least as far as Keynes’s 1930 essay on “Economic Possibilities for our Grandchildren.” Not only would work be eliminated or vastly reduced by technology, Keynes predicted, but we would also be unburdened spiritually. Devotion to work was, he deemed, one of many “pseudo-moral principles” that “exalted some of the most distasteful of human qualities into the position of the highest virtues.”

Since people in this new world would no longer have to earn a salary, they would, Livingston envisions, receive some kind of universal basic income. UBI is a slippery concept, adaptable to both the socialist left and libertarian right, but it essentially entails distributing a living wage to every member of society. In most conceptualizations, the income is indeed basic—no cases of Dom Pérignon—and would cover the essentials like rent and groceries. Individuals would then be free to choose whether and how much they want to work to supplement the UBI. Leftist proponents tend to advocate pairing UBI with a strong welfare state to provide nationalized health care, tuition-free education, and other services. Some libertarians view UBI as a way to pare down the welfare state, arguing that it’s better simply to give people money to buy food and health care directly, rather than forcing them to engage with food stamp and Medicaid bureaucracies.

According to Livingston, we are finally on the verge of this postwork society because of automation. Robots are now advanced enough to take over complex jobs in areas like agriculture and mining, eliminating the need for humans to perform dangerous or tedious tasks. In practice, however, automation is a double-edged sword, with the capacity to oppress as well as unburden. Machines often accelerate the rate at which humans can work, taxing rather than liberating them. Conveyor belts eliminated the need for workers to pass unfinished products along to their colleagues—but as Charlie Chaplin and Lucille Ball so hilariously demonstrated, the belts also increased the pace at which those same workers needed to turn wrenches and wrap chocolates. In retail and customer service, a main function of automation has been not to eliminate work, but to eliminate waged work, transferring much of the labor onto consumers, who must now weigh and code their own vegetables at the supermarket, check out their own library books, and tag their own luggage at the airport.

At the same time, it may be harder to automate some jobs that require a human touch, such as floristry or hairstyling. The same goes for the delicate work of caring for the young, sick, elderly, or otherwise vulnerable. In today’s economy, the demand for such labor is rising rapidly: “Nine of the twelve fastest-growing fields,” The New York Times reported earlier this year, “are different ways of saying ‘nurse.’” These jobs also happen to be low-paying, emotionally and physically grueling, dirty, hazardous, and shouldered largely by women and immigrants. Regardless of whether employment is virtuous or not, our immediate goal should perhaps be to distribute the burdens of caregiving, since such work is essential to the functioning of society and benefits us all.

A truly work-free world would entail a revolution in our present social organization. We could no longer conceive of welfare as a last resort, as the “safety net” metaphor implies, but would be forced to treat it as an unremarkable and universal fact of life. This alone would require us to support a massive redistribution of wealth, and to reclaim our political institutions from the big-money interests that are allergic to such changes. Tall orders indeed, but as Srnicek and Williams remind us in their book, Inventing the Future: Postcapitalism and a World Without Work, neoliberals pulled off just such a revolution in the postwar years. Thanks to their efforts, free-market liberalism replaced Keynesianism as the political and economic common sense all around the world.

Another possible solution to the current miseries of unemployment and worker exploitation is the one Livingston rejects in his title: full employment. For anti-work partisans, full employment takes us in the wrong direction, and UBI corrects the course. But the two are not mutually exclusive. In fact, rather than creating new jobs, full employment could require us to reduce our work hours drastically and spread them throughout the workforce—a scheme that could radically de-center waged work in our lives. A dual strategy of pursuing full employment while also demanding universal benefits—including health care, childcare, and affordable housing—would maximize workers’ bargaining power to ensure that they, and not just owners of capital, actually get to enjoy the bounty of labor-saving technology.

Nevertheless, Livingston’s critiques of full employment are worth heeding. As with automation, it can all go wrong if we use the banner of full employment to create pointless roles—what David Graeber has termed “bullshit jobs,” in which workers sit in some soul-sucking basement office for eight hours a day—or harmful jobs, like building nuclear weapons. If we do not have a deliberate politics rooted in universal social justice, then full employment, a basic income, and automation will not liberate us from the degradations of work.

Both Livingston and Anderson reveal how much of our own power we’ve already ceded in making waged work the conduit for our ideals of liberty and morality. The scale and coordination of the institutions we’re up against in the fight for our emancipation are, as Anderson demonstrates, staggering. Employers hold the means to our well-being, and they have the law on their side. Individual efforts to achieve a better “work-life balance” for ourselves and our families miss the wider issue we face as waged employees. Livingston demonstrates the scale at which we should be thinking: Our demands should be revolutionary, our imaginations wide. Standing amid the wreckage of last year’s presidential election, what other choice do we have?

Miya Tokumitsu is a lecturer of art history at the University of Melbourne and a contributing editor at Jacobin. She is the author of Do What You Love: And Other Lies About Success and Happiness.