By Doug Johnson Hatlem

Source: CounterPunch

At the end of the climactic scene (8 minutes) in HBO’s Emmy-nominated Hacking Democracy (2006), a Leon County, Florida, election official breaks down in tears. “There are people out there who are giving their lives just to try to make our elections secure,” she says. “And these vendors are lying and saying everything is alright.” Hundreds of jurisdictions throughout the United States are using voting machines or vote tabulators that have flunked security tests. Those jurisdictions are, by and large, where former Secretary of State Hillary Clinton is substantially outperforming the first full wave of exit polling in her contest against Senator Bernie Sanders.

CounterPunch has interviewed hackers, academics, exit pollsters, and elections officials and workers in multiple states for this series taking election fraud allegations seriously. The tearful breakdown in Hacking Democracy is not surprising. There is a truly remarkable gap between what security experts and academics say about the vulnerability of voting machines and the confidence that elections experts and academics, media outlets, and elections officials place in those same machines.

In Leon County, Bev Harris’ Black Box Voting team had just demonstrated a simple hack of an AccuVote tabulator for bubble-marked paper ballots. Ion Sancho, Leon County’s Supervisor of Elections, also fights back tears in the Hacking Democracy clip: “I would have certified this election as a true and accurate result of a vote.” Sancho adds, “The vendors are driving the process of voting technology in the United States.”

In 2010 (and this reminder will pain those of you who remember when Nate Silver’s outfit did real data journalism rather than mostly yay-Clinton, boo-Trump punditry), a FiveThirtyEight column argued that hacking was one of two possible explanations for statistical anomalies in a Democratic Senate primary in South Carolina: “B. Somebody with access to software and machines engineered a very devious manipulation of the vote returns.”

Joshua Holland’s column in The Nation “debunking” claims of election fraud benefiting Clinton rests its case on a simple proposition: why would Clinton need to cheat when she was winning anyway? Apparently, Mr. Holland has never heard of an obscure American politician named Richard Nixon.

More importantly, entering the South Carolina primary, the pledged delegate count was 52-51. CNN’s poll two weeks out projected an 18-point Clinton win. Ann Selzer, the best pollster in the United States, projected a 22-point Clinton win. The RealClearPolitics polling average projected a 27.5-point win. FiveThirtyEight was much bolder, projecting a 38.3-point Clinton win. The early full exit poll said Clinton had won by 36 points, pretty close to FiveThirtyEight’s call. Tellingly, white voters in that exit poll went for Sanders 58-42. But the final results said Clinton won by 47.5 points, an 11.5-point exit polling miss. And the exit pollsters had to adjust their initial figures to show a 53-47 Clinton win among white Democrats in South Carolina.

Three days after South Carolina’s primary, Clinton again seriously outperformed her exit polling projections in a number of states on Super Tuesday, including Massachusetts, where she went from a projected 6.6-point loss to a 1.4-point win. Super Tuesday set the narrative that Sanders had no chance of beating Clinton in pledged delegates.
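The “exit polling miss” used throughout this series is, as I read it, the final Clinton-minus-Sanders margin minus the margin in the early full exit poll. A minimal sketch, assuming margins are expressed in percentage points with a negative number meaning a projected Sanders lead (so a positive miss means Clinton beat her exit poll); the function name `exit_poll_miss` is hypothetical, and the only inputs are the South Carolina and Massachusetts figures quoted above.

```python
def exit_poll_miss(exit_poll_margin: float, final_margin: float) -> float:
    """Return how far the final result moved in Clinton's favor relative to
    the early exit poll, in percentage points (rounded to 2 decimals).

    Both margins are Clinton minus Sanders; a negative margin is a Sanders lead.
    A positive return value is a miss in Clinton's favor.
    """
    return round(final_margin - exit_poll_margin, 2)


# South Carolina: exit poll Clinton +36, final result Clinton +47.5
sc_miss = exit_poll_miss(36.0, 47.5)
# Massachusetts: exit poll Clinton -6.6 (a projected loss), final result Clinton +1.4
ma_miss = exit_poll_miss(-6.6, 1.4)

print(f"South Carolina miss: {sc_miss} points")  # South Carolina miss: 11.5 points
print(f"Massachusetts miss: {ma_miss} points")   # Massachusetts miss: 8.0 points
```

Measuring the miss on the margin rather than on Clinton’s share alone is a design choice that matches how the spreads are reported in this piece; both of these misses exceed the 7-point threshold used later to group states.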

Correlating Exit Polling Misses and Bad Machines

Let’s be clear: yes, correlation does not equal causation. What strong correlation does do, however, is set the agenda for reasonable investigation. Mocking fraud claims where there is a strong correlative case and actual evidence of potential vote tampering, in places like Arizona, New York, and Chicago, is precisely the kind of thing that has driven confidence in media outlets to an all-time low. Just 6% of people in the U.S., about the same share as for Congress, have high confidence that the media are unbiased and accurate.

Meanwhile, according to a September 2015 study by the Brennan Center for Justice at New York University’s School of Law, all of South Carolina’s voting machines are more than ten years old. In fact, drawing on Verified Voting, the source the Brennan Center report relied on, South Carolina uses provably hackable voting machines without a verified paper trail. Virtually all counties in South Carolina use two machines in particular: Election Systems & Software’s (ES&S) iVotronic, a touchscreen voting machine without a paper trail, and ES&S’s Model 100, used to tabulate absentee and provisional ballots.

Kim Zetter, the best reporter on hacking and computer security at Wired Magazine, delved into the Brennan Center report with an article entitled “The Dismal State of America’s Decade-Old Voting Machines.” Zetter noted that in 2002, after the Bush v. Gore disaster, Congress passed the Help America Vote Act (HAVA) with billions of dollars available for counties throughout the U.S. to upgrade to new voting machines. Zetter then hits the critical point for discussion of election fraud allegations in the Democratic presidential primary:

But many of the machines installed then, which are still in use today, were never properly vetted—the initial voting standards and testing processes turned out to be highly flawed—and ultimately introduced new problems in the form of insecure software code and design.

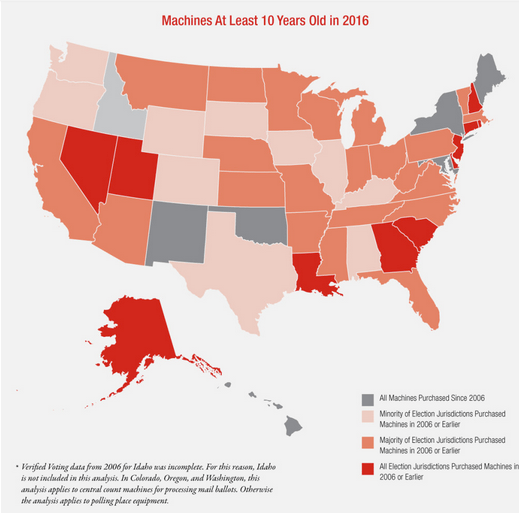

Things are dismal, yes, but not evenly so. As this map from the Brennan Center report shows, only a few states are as badly off as South Carolina (all machines ten years old or older). But there are equally few states that are relatively well off, with all machines less than ten years old.

Of the nine places where the exit polling has missed by more than 7 points (South Carolina, Alabama, Georgia, Massachusetts, Tennessee, Texas, Mississippi, Ohio, New York), two-thirds are states where all or a majority of election jurisdictions use machines ten years old or older. For these six states, the average initial exit polling miss is a whopping 9.98 points. As I reported in my column on exit polling misses last week, the average exit polling miss in Clinton’s favor is 5.1 points. For the three states with exit polling in which all election jurisdictions use machines less than ten years old (Oklahoma, New York, and Maryland; gray in the map), the average miss in Clinton’s favor is just 1.67 points. Note that this 1.67-point average includes New York, with its huge miss in Clinton’s favor. Alabama, where only a minority of jurisdictions use machines more than ten years old, is also worth a close look, because I have been using an “Alabama Test” to see whether theories for the exit polling misses make sense.

I put figures like these to Joe Lenski, exit pollster and Executive Vice President of Edison Research, as question 10 of the interview I completed with him, a question I had left out of the published version. I wanted to know whether the gap in exit polling misses raised any red flags. Here was Lenski’s reply:

The reliability of vote equipment is a true concern but I don’t see any evidence how the concentration of older voting machines in certain states would have affected either candidate more than the other. There are many examples of vote count errors. Here is a link reporting a recent vote count error in the Michigan primary that inflated Ted Cruz’s vote by 3000 votes http://uselectionatlas.org/WEBLOGS/dave/ . These types of errors are discovered all the time but there is no evidence that these are anything more than mistakes by local election officials – not a systematic attempt to affect a single candidate’s vote totals. This reminds me of theories after the 2008 New Hampshire Democratic Primary based upon the fact that Hillary Clinton did better in towns with voting machines while Barack Obama did better in towns that voted on paper. That was simply an artifact of the demographics in New Hampshire of the towns that had voting machines versus those that voted on paper. Again the states with older voting machines in 2016 may just be the same states with demographics that favored Hillary Clinton over Bernie Sanders.

But again, as I argued last Wednesday, state demographics and the other proposed explanations for exit polling misses do not actually add up. Big misses have happened in the South, in Massachusetts, and also in Ohio, even though Sanders otherwise did quite well in the Midwest. Nor do age or early voting patterns predict exit polling misses. Still, what is most remarkable about Lenski’s statement is that he is one of the few non-tech experts we spoke with who recognized that the “reliability of vote equipment is a true concern.”

None of the three elections academics I spoke with for last Wednesday’s piece appeared to be familiar with the Brennan Center report on aging and vulnerable machines. Antonio Gonzalez, an exit polling expert and Latino voter registration guru who, in last Thursday’s piece, called for the parties not to seat Arizona’s delegations, seemed a bit floored when I presented him with question 10 from my interview with Lenski. “Oh,” he said, “I thought Congress was supposed to have taken care of that with HAVA.” HAVA, as noted earlier, offered money from 2002 to 2006 for states to upgrade to the then-latest and greatest voting technology.

At this point we should take a look at the proven flaws in four very old and hackable machines in particular. These machines or similar elderly and vulnerable machines are in use in almost all places where Clinton outperforms exit polling most substantially. Because I am taking evidence and counter-evidence seriously, we will also look at the machines used in New York City, which are not quite so old (about six or seven years). While those machines, ES&S’s DS200, have had several problems over the years of the type suggested by Lenski, they also have not verifiably flunked independent security tests, so far as I know.

AccuVote (TS, OS, TSX models)

AccuVote technology is among the worst of the worst. This is the Diebold technology hacked in the Hacking Democracy clip. It is more than ten years old, it can be hacked in such a way that even the models with a paper trail (OS, TSX) can be tricked, and it is in use throughout Georgia (where the exit polls missed by 12.2 points) and in more than 300 counties or other election jurisdictions in more than 20 states.

AVC Edge and Edge II (from my column on Chicago last Friday)

The AVC Edge and Edge II (with paper trail) were provably hacked by a “Red Team” from UC Santa Barbara hired by the State of California in 2008. Jim Allen, spokesman for the Chicago Board of Elections, called and emailed to complain after my article last Friday. Citing its paper trail, he dismissed the suggestion that the Edge II could be hacked. Not only is this laughable, since his team conducted a wildly inaccurate audit of the paper trail from the Chicago Democratic primary, but Allen apparently failed to click on the link regarding the UCSB Red Team test that I included in the article. The first paragraph of that article notes that Edge machines, “even those with a so-called Voter Verified Paper Trail” can be successfully hacked by a single person. AVC Edge machines are in use without a paper trail throughout Louisiana (where there were no exit polls but where Clinton seriously outperformed her pre-election polling average) and in more than 130 counties in various other states.

Model 100 (from ES&S)

Model 100 also badly flunked the California “Red Team” test in 2008. Like the other machines on this list, it is hackable in a way that spreads virally to other machines in the same network. Hundreds of jurisdictions still use Model 100 to tabulate votes, notably Wayne County (Detroit), 27 counties in Ohio, 9 counties in Tennessee, 78 counties in Texas, and many more that closely match the places where Clinton has outperformed exit polls.

iVotronic (ES&S)

iVotronic machines are touchscreen voting machines, many without a paper trail. iVotronic machines flunked a University of Pennsylvania test in 2007 and are the very machines at issue in the suspicious 2010 Democratic primary results in South Carolina. They continue to be used throughout South Carolina (with no paper trail) and in hundreds of counties in states where Clinton has suspiciously outperformed exit polling.

DS200

DS200 machines have had a wide variety of malfunctions, particularly in New York City, but those problems can be, and mostly have been, addressed in places like New York City by retraining poll workers to check immediately whether each voter’s vote was counted and, if necessary, offering a new chance to vote. As stated, the DS200 has not been provably hacked so far as I know. Newer machines of this sort were put into use just this year in Maryland, where the overall exit polling missed in Sanders’ favor for once, but by just 0.6 points. Still, the votes in Baltimore County have now been decertified because, among other things, there were more votes than voters who checked in at the polls. In Maryland, the DS200 machines are all networked to a statewide system for tabulating votes quickly. Networking, however, is not required, and my best information suggests that the DS200 is not networked in New York City. Instead, according to my discussions with a poll worker from Brooklyn, precinct workers pull the results off the machine at the end of the voting day and relay them to county headquarters.
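One of the problems behind the decertified Baltimore results, more votes than voters who checked in, is the kind of discrepancy a simple per-precinct comparison flags automatically. A minimal sketch, assuming each precinct has a poll-book check-in count, a machine-tape ballot total, and a reported total; the `PrecinctRecord` fields and the sample rows are hypothetical and synthetic, not Maryland’s actual data format or results. It checks every precinct rather than a sample.

```python
from dataclasses import dataclass


@dataclass
class PrecinctRecord:
    """Hypothetical per-precinct record; field names are illustrative only."""
    precinct: str
    checked_in: int      # voters signed in at the polls (poll book)
    tape_total: int      # ballots counted on the machine's end-of-day tape
    reported_total: int  # ballots appearing in the official results


def reconcile(records: list[PrecinctRecord]) -> list[tuple[str, str]]:
    """Check every precinct (100%, not a sample); return (precinct, problem) pairs."""
    problems = []
    for r in records:
        if r.tape_total > r.checked_in:
            problems.append((r.precinct, "more ballots than voters checked in"))
        if r.tape_total != r.reported_total:
            problems.append((r.precinct, "tape total differs from reported total"))
    return problems


# Synthetic example data, not real results.
sample = [
    PrecinctRecord("P-001", checked_in=500, tape_total=498, reported_total=498),
    PrecinctRecord("P-002", checked_in=300, tape_total=305, reported_total=305),
    PrecinctRecord("P-003", checked_in=410, tape_total=410, reported_total=432),
]

for precinct, problem in reconcile(sample):
    print(precinct, problem)
```

A check like this only compares records against each other; if the records themselves were altered consistently upstream, every comparison could still agree.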

What About the Exceptions to This Correlation?

But we also have to deal with the exceptions to this strong correlation between hackable machines and Clinton badly beating the exit polling. Here’s where my conversation with a particular veteran hacker comes into play. I chatted securely with a long-time member of Anonymous whom I’ll call the King of SciAm (not the handle they use publicly or privately). The King of SciAm has long worked with the Telecommix branch of Anonymous. Telecommix rose to fame when Hosni Mubarak cut off internet access in Egypt during the Arab Spring uprising. Telecommix found workarounds via dial-up internet to keep information from activists on the ground flowing out of Egypt. As a general rule, Telecommix does not take part in Anonymous leaks or website shutdowns and defacements, but they made an exception to that rule early in this campaign cycle: Telecommix members defaced Donald Trump’s website with a tribute to Jon Stewart upon his retirement. The New Yorker’s Alex Koppelman called it the “classiest website hack ever,” a compliment the King of SciAm relishes.

The King of SciAm emphasized to me that, if hired to hack an election (which they would never do), the first thing they would do would be to figure out the best way to leave no trace: “we’d target the network packets or their headwater.” The key idea is for “a hack to survive the security audit trail after the vote is certified.” Furthermore, “we would likely try to target the thing most likely to get it’s logs wiped first – so – whatever it plugs into to move the data. Are the voting machines in use network connected?”

The King of SciAm told me that targeting old, provably hackable machines is “not an unfair theory,” but “you asked how (if we did these sort of things) we would do them.” The problem, they noted, “is that any change to the voting machine operating system or driver stack will likely be found in the security auditor’s rotation pretty quickly. This is because once the machines are down (end of election day) – they are no longer accessible to revert any source code changes or wipe any logs that said you were there, unless you’ve written STUXnet – in which case you wouldn’t be targeting the booth machines either.”

The King of SciAm was not at all surprised that sloppy hackers may be targeting older machines in places like South Carolina and Chicago, nor that elections officials were cluelessly trusting those machines and not even properly following procedures that could catch a less sophisticated hack.

So if, instead of targeting the DS200 in New York, hackers had targeted something further upstream in the voting ecosystem, how would you catch it? The King of SciAm noted that you would have to use some procedure to “match 100% of the data, not 5%,” rather than auditing a 5% sample as Chicago did.

To do this, you would need a methodology much more like the one used in the FiveThirtyEight article on irregularities in the 2010 South Carolina primary. There, FiveThirtyEight referred to a Benford’s law test on precinct-level results. That test showed an “unusual, non-random pattern in the precinct-level results suggest[ing] tampering, or at least machine malfunction, perhaps at the highest level.”
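For readers unfamiliar with it, Benford’s law predicts that the leading digit d of many naturally occurring counts appears with probability log10(1 + 1/d), so 1 leads about 30% of the time and 9 less than 5% of the time. A minimal first-digit sketch, assuming precinct vote counts as input; the function names are hypothetical, and the FiveThirtyEight article may have used a different variant (election researchers often test the second digit instead, partly because precinct sizes span a limited range). A large statistic signals only a departure from the expected pattern, not fraud by itself.

```python
import math
from collections import Counter


def benford_expected(d: int) -> float:
    """Benford's law probability that a number's leading digit is d (1-9)."""
    return math.log10(1 + 1 / d)


def leading_digit(n: int) -> int:
    """Leading (most significant) decimal digit of a positive integer."""
    return int(str(n)[0])


def benford_chi_square(counts: list[int]) -> float:
    """Pearson chi-square statistic comparing observed first digits with Benford's law.

    Zero counts are skipped because they have no leading digit. With 8 degrees
    of freedom, values above about 15.51 are unusual at the 5% level.
    """
    digits = [leading_digit(c) for c in counts if c > 0]
    n = len(digits)
    observed = Counter(digits)
    chi2 = 0.0
    for d in range(1, 10):
        expected = n * benford_expected(d)
        chi2 += (observed[d] - expected) ** 2 / expected
    return chi2


# Powers of two are a textbook Benford-conforming sequence: a small statistic.
print(round(benford_chi_square([2 ** k for k in range(1000)]), 2))
# Counts that all start with 5 depart sharply from Benford: a large statistic.
print(round(benford_chi_square([500 + i for i in range(100)]), 2))
```

Applied to an election, `counts` would be the per-precinct vote totals for one candidate, ideally for every precinct rather than a sample.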

Intriguingly, after I began this series on election fraud allegations, a reader who wishes to remain anonymous emailed to point out similar irregularities in New York’s Democratic primary this year:

Results for Kings County and Bronx county [show] deviation from perfect 60-40 and 70-30 results was the same 0.035%. The increase in votes in Kings (Brooklyn) from 2008 is incredible, almost a perfect 10%. Not only that but that’s where over a 100,000 voters lost their right to vote. Another 20,000 votes in Kings would mean almost a 20% increase which would be amazing compared to other counties that experienced decreases or mild increases.

Furthermore, the overall results in New York, as announced on election night, deviated from a perfect 58-42 split “by 0.005345%. That’s 97 votes out of over 1.8 million.” Will FiveThirtyEight apply a Benford’s law test to 2016 primary results? Not a chance. They have boosted Clinton throughout and are already quite embarrassed by how badly they missed on the GOP side with Donald Trump.
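The reader’s arithmetic can be checked directly. The deviation from a “perfect” split is the gap between a candidate’s actual share and the round target share; multiplying it by the total vote turns it back into votes. A minimal sketch, assuming (as the “97 votes out of over 1.8 million” figure implies) that the deviation is measured against the total vote; the function names are hypothetical, and the only inputs are the figures quoted above.

```python
def deviation_from_split(candidate_votes: int, total_votes: int, target_share: float) -> float:
    """Gap between a candidate's actual share and a round target share (e.g. 0.58),
    as a fraction of all votes cast."""
    return abs(candidate_votes / total_votes - target_share)


def deviation_in_votes(deviation_pct: float, total_votes: float) -> float:
    """Convert a deviation given in percent of the total vote into a number of votes."""
    return deviation_pct / 100 * total_votes


def implied_total(vote_gap: float, deviation_pct: float) -> float:
    """Total vote implied by a vote gap and that same gap expressed in percent."""
    return vote_gap / (deviation_pct / 100)


# Figures quoted above: a 0.005345% deviation, "97 votes out of over 1.8 million"
print(round(deviation_in_votes(0.005345, 1_800_000), 1))  # 96.2 votes at exactly 1.8 million
print(round(implied_total(97, 0.005345)))                  # roughly 1.81 million votes in total
```

The two figures are consistent: 97 votes at 0.005345% implies a total a little above 1.8 million.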

But what about our own test, the “Alabama Test”? What’s good for the goose is good for the gander. Only a minority of Alabama’s jurisdictions use old, provably hackable machines. Does that weaken the correlation behind the theory that, in most places, sloppy hackers targeted old, provably vulnerable machines, while more sophisticated hackers would have had to be involved in targeting New York’s results as well as in registration-switching operations in a wide variety of states?

Taking a look at Alabama at the county level gives us a fairly strong answer. Most of Alabama’s counties use hand-marked paper ballots tabulated by the DS200, but a minority use Model 100, one of our flunked machines. Three of those Model 100 counties, however, are among the four biggest counties in Alabama (Jefferson, Mobile, and Montgomery), and together they accounted for around 40% of the Democratic vote in Alabama. Clinton won by a 64.2-point spread in Jefferson, by 66.5 points in Mobile, and by a stunning 73.4 points in Montgomery. What happened in Madison, the one county of the top four by population that tabulates votes with the DS200? Clinton won by just a 38.5-point spread! In fact, Clinton did not reach 80% of the vote in any of the top twelve counties by population except the three counties using Model 100 to tabulate votes.
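The 80% observation follows from the spreads: if nearly all votes went to Clinton or Sanders, a spread of s points means Clinton’s share is roughly (100 + s)/2. A minimal sketch, assuming a strictly two-candidate vote (votes for anyone else are ignored, so the shares come out slightly high); the function name `share_from_spread` is hypothetical, and the spreads are the ones quoted above.

```python
def share_from_spread(spread_points: float) -> float:
    """Approximate Clinton vote share (%) from her Clinton-minus-Sanders spread.

    Assumes only two candidates: share - (100 - share) = spread,
    so share = (100 + spread) / 2. Votes for other candidates are ignored,
    which slightly overstates the share.
    """
    return (100 + spread_points) / 2


# County spreads quoted above (Clinton minus Sanders, in points)
spreads = {"Jefferson": 64.2, "Mobile": 66.5, "Montgomery": 73.4, "Madison": 38.5}
for county, spread in spreads.items():
    print(f"{county}: ~{share_from_spread(spread):.2f}% for Clinton")
```

Under this approximation the three Model 100 counties all land above 80% and Madison well below it, which is the pattern described above.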

Controlling for factors such as the size of the African American population or wealth does not account for the gap between the Model 100 counties and Madison, either. Take Mobile, where the population is 35.3% black, versus Madison County, where it is 24.6% black. A roughly 10-point difference in black population share does not account for a 28-point difference in the Clinton-Sanders spread. What’s more, if you compare Mobile to a very similar county in North Carolina (where the exit polls did not really miss), you see something similarly telling.

Cumberland County, NC, is very comparable to Mobile, Alabama. The two have similar populations, similar numbers of black residents (with Cumberland slightly higher at 37.6% African American), very similar per capita income figures, and both counties had about 35,000 Democratic voters. Clinton won Cumberland by 32.8 points, very close to the Madison County (DS200) result and about half the spread Clinton saw in Mobile (Model 100).
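The county comparison in the last two paragraphs reduces to a few subtractions and one ratio. A minimal sketch that recomputes them from the figures quoted in this piece; the dictionary layout is only an illustrative choice, and the arithmetic by itself says nothing about what caused the differences.

```python
# Figures quoted above: Clinton-minus-Sanders spread (points) and black population share (%)
counties = {
    "Mobile, AL (Model 100)": {"spread": 66.5, "black_pct": 35.3},
    "Madison, AL (DS200)": {"spread": 38.5, "black_pct": 24.6},
    "Cumberland, NC": {"spread": 32.8, "black_pct": 37.6},
}

mobile = counties["Mobile, AL (Model 100)"]
madison = counties["Madison, AL (DS200)"]
cumberland = counties["Cumberland, NC"]

# Difference in Clinton's spread between Mobile and Madison
spread_gap = mobile["spread"] - madison["spread"]
# Difference in black population share between Mobile and Madison
black_gap = mobile["black_pct"] - madison["black_pct"]
# Cumberland's spread as a fraction of Mobile's
cumberland_ratio = cumberland["spread"] / mobile["spread"]

print(f"Mobile vs. Madison spread gap: {spread_gap:.1f} points")
print(f"Mobile vs. Madison black-population gap: {black_gap:.1f} points")
print(f"Cumberland spread as a fraction of Mobile's: {cumberland_ratio:.2f}")
```

Note that Cumberland has the highest black population share of the three yet the smallest spread, which runs against a purely demographic explanation of Mobile’s result.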

Of the theories we have so far for why exit polling missed in Alabama by a huge 14 points, the only one that provides a reasonable explanation is vote tabulating machine tampering. Now, perhaps someone else will come up with a non-fraudulent theory of the exit polling misses that passes the Alabama Test and explains other states as well. Such a theory cannot be about early voting (Alabama had none) or over-projecting young voters (there were very few, according to exit polls of Alabama).

Until someone comes up with such a workable theory, election fraud benefiting Hillary Clinton, to the tune of a 120 to 150 pledged delegate difference, is the best explanation we have. People wanting to prove this theory should be suing for a technologically sophisticated and independent review of the results and of the voting systems’ entire computer ecosystems in places like Ohio, South Carolina, Alabama, Boston, Chicago, New York, and many others.

Part 1: Taking Election Fraud Allegations Seriously

Part 2: Debunking Some Election Fraud Allegations

Part 3: In-depth Report on Exit Polling and Election Fraud Allegations

An Interview With Lead Edison Exit Pollster Joe Lenski

Part 4: Purged, Hacked, Switched

Part 5: Chicago Election Official Admits “Numbers Didn’t Match”
